Reading the AFL Source - afl-as

main

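/* Main entry point */

- First, inst_ratio_str is initialized from the environment variable AFL_INST_RATIO

- Decide whether quiet mode applies and set be_quiet accordingly

- Seed the random number generator from the current time and the pid

- Call edit_params to rewrite the arguments based on argc and argv

- If inst_ratio_str is set, check that its value lies between 0 and 100, and abort with an error if it does not

- If the environment variable AS_LOOP_ENV_VAR is already set, abort with an error; otherwise set it to 1. This guards against afl-as ending up calling itself in an endless loop

- If the build uses ASAN (or MSAN), set sanitizer and divide inst_ratio by 3

  - afl-as has no way to recognize ASAN-specific branches, so it would insert a lot of meaningless instrumentation; it compensates by bluntly cutting the instrumentation probability to a third

- Unless only the version is being queried, call add_instrumentation, the function that does the actual instrumentation

- fork a child process and have it execvp the processed as_params

  - execvp replaces the program in the current process with as_params[0], so without the fork no process would be left to perform the unlink that follows

- Finally, if AFL_KEEP_ASSEMBLY is not set, unlink modified_file

As this shows, the main logic of afl-as lives in edit_params and add_instrumentation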
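The ratio handling in main can be sketched in C as follows. This is a minimal illustration, not the code from afl-as.c: the helper name effective_inst_ratio and its return convention are assumptions made for the example, and the default of 100 is assumed to apply when AFL_INST_RATIO is not set.

```c
#include <stdio.h>

/* Hypothetical helper (not an AFL function): returns the effective
   instrumentation ratio in percent, or -1 if ratio_str is invalid.
   ratio_str may be NULL (variable not set); 100 is assumed then. */
int effective_inst_ratio(const char *ratio_str, int sanitizer) {
  unsigned int ratio = 100;

  if (ratio_str) {
    /* Reject anything that is not a number in [0, 100]. */
    if (sscanf(ratio_str, "%u", &ratio) != 1 || ratio > 100) return -1;
  }

  /* With a sanitizer, compensate for sanitizer-only branches. */
  if (sanitizer) ratio /= 3;

  return (int)ratio;
}
```

For example, a ratio of 90 combined with a sanitizer build ends up as 30, while a value such as 150 is rejected.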

edit_params

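/* Examine and modify parameters to pass to 'as'. Note that the file name

As the comment at the top of the function explains, the last argument GCC passes is always the file name, and relying on that keeps the code simple.

- Read the environment variable AFL_AS, which names the real assembler that afl-as should invoke

- On macOS, when clang is in use and AFL_AS is not set, clang -c is used instead of as -q to assemble the file

- Look up the temporary directory in the environment variables TMPDIR, TEMP and TMP, in that order, falling back to /tmp if none of them is set

- Allocate (argc + 32) * sizeof(u8*) bytes for as_params

- Set as_params[0] to afl_as, or to the default "as"

- Walk argv (every argument except the last), paying attention to the following:

  - --64 or --32 selects the architecture

  - On macOS, the value after -arch is inspected: x86_64 selects 64-bit mode, while i386 aborts with an error because 32-bit Apple platforms are not supported

  - In clang mode, -q and -Q are skipped

- When clang is used as the assembler, add the options -c -x assembler

- Inspect input_file (the last argument). If it starts with -:

  - If it is --version (the code compares the text after the first - against -version), jump straight to the end and return; the version query is handled later

  - If it is a bare -, input_file is cleared so that the input is read from stdin

  - Anything else aborts with an error

- If input_file does not start with -, compare its prefix against tmp_dir, /var/tmp/ and /tmp/; if none of them matches, set pass_thru to 1, since the file is then probably a hand-written .s file rather than compiler output

- Set modified_file to tmp_dir + "/.afl-" + getpid() + "-" + (u32)time(NULL) + ".s"

- Finally, append modified_file to as_params and return

Overall, this does much the same as edit_params in afl-gcc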
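The pass_thru decision and the naming of modified_file can be illustrated with a short C sketch. The helper names is_pass_thru and format_modified_file are hypothetical and chosen only for this example; the prefix comparison and the name format follow the description above.

```c
#include <stdio.h>
#include <string.h>

/* Hypothetical helper: 1 if input_file does not live under a known
   temporary directory, i.e. it should be passed through untouched. */
int is_pass_thru(const char *input_file, const char *tmp_dir) {
  return strncmp(input_file, tmp_dir, strlen(tmp_dir)) &&
         strncmp(input_file, "/var/tmp/", 9) &&
         strncmp(input_file, "/tmp/", 5);
}

/* Hypothetical helper: formats the temporary output name into buf. */
int format_modified_file(char *buf, size_t len, const char *tmp_dir,
                         unsigned pid, unsigned now) {
  return snprintf(buf, len, "%s/.afl-%u-%u.s", tmp_dir, pid, now);
}
```

A compiler-generated file such as /tmp/ccAbCd.s would be instrumented, whereas /home/user/hand.s would be copied through unchanged.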

add_instrumentation

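/* Process input file, generate modified_file. Insert instrumentation in all

This is the main instrumentation code.

- Open input_file as the input stream inf, aborting with an error if it cannot be read. If input_file is empty, read from stdin instead

- Open modified_file as the output stream outf, aborting with an error if it cannot be created or written

- Read inf line by line (at most MAX_LINE = 8192 bytes per line), copy each line to outf, and run the actual instrumentation logic on it. Only the .text section is instrumented:

  - Before the line is written: if neither pass_thru nor any skip_xxx flag is set, the other conditions hold (mainly instr_ok and instrument_next), and the line starts with \t followed by a letter, insert the instrumentation trampoline (trampoline_fmt) and clear instrument_next

  - In pass_thru mode, nothing further is done with the line

  - OpenBSD embeds jump tables directly in the code, so the p2align directives around them are detected and the label that follows is skipped

  - Finding a .text section sets instr_ok to 1; finding any other section sets instr_ok to 0

  - A few checks then set some flags

    - off-flavor assembly: .code32 or .code64 sets skip_csect when it does not match the target word size

    - syntax switches: .intel_syntax sets skip_intel, and .att_syntax clears it again

    - ad-hoc asm blocks: #APP sets skip_app, and #NO_APP clears it again

  - Next comes the important part. The following are of interest:

    - ^main: a function label such as main

    - ^.L0: GCC branch label

    - ^.LBB0_0: clang branch label (clang mode only)

    - ^\tjnz foo: conditional jump

  - The following are ignored:

    - ^# BB#0: clang comment

    - ^ # BB#0: ditto

    - ^.Ltmp0: clang non-branch label

    - ^.LC0: GCC non-branch label

    - ^.LBB0_0: ditto (when in GCC mode)

    - ^\tjmp foo: unconditional jump

  - Based on the flags, instr_ok, and whether the line starts with # or a space, decide whether to go on with this line

  - For a conditional jump matching \tj[^m], draw a random number with R(100) and compare it with the instrumentation density inst_ratio. If it is smaller, write the trampoline (trampoline_fmt) for the target architecture to outf, increment the counter ins_lines, and move on to the next line. Since the jump itself has already been written, the trampoline lands on the fall-through path

  - Check whether the line contains ":" (the check is somewhat stricter on macOS), then whether it starts with "."

    - If it does, check whether it has the form .L<digit> or .LBB, that is, a branch label. Draw a random number with R(100) and compare it with inst_ratio; if it is smaller, set instrument_next to 1

    - If it does not, the line is a function label, and instrument_next is set to 1 directly

    - Once instrument_next is set, the trampoline (trampoline_fmt) is inserted on a following iteration, at the next line that holds an instruction

- Keep looping until inf is exhausted

- If the counter ins_lines is non-zero, write main_payload to outf

- Close inf and print some information

This shows that afl-as decides whether a line marks a branch or a function purely from how the assembly line begins (label or jump mnemonic), and inserts the trampoline (trampoline_fmt) if it does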
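The matching rules listed above can be condensed into a small classifier. The following C sketch is an illustration under simplifying assumptions: it covers only the non-macOS rules, ignores section tracking and the skip_xxx flags, and the names line_kind and classify_line are made up for the example.

```c
#include <ctype.h>
#include <string.h>

/* Hypothetical names, for illustration only. */
enum line_kind { LINE_OTHER, LINE_COND_JUMP, LINE_BRANCH_LABEL, LINE_FUNC_LABEL };

/* Classify one assembly line following the rules described in the text
   (non-macOS rules; section and skip_* state are ignored here). */
enum line_kind classify_line(const char *line, int clang_mode) {
  /* Comments and lines starting with a space are never of interest. */
  if (line[0] == '#' || line[0] == ' ') return LINE_OTHER;

  if (line[0] == '\t') {
    /* "\tj" followed by anything but 'm': conditional jump. */
    if (line[1] == 'j' && line[2] != 'm') return LINE_COND_JUMP;
    return LINE_OTHER;
  }

  /* Everything else of interest is a label and must contain ':'. */
  if (!strchr(line, ':')) return LINE_OTHER;

  if (line[0] == '.') {
    /* .L<digit> (GCC) or .LBB (clang mode only) are branch labels. */
    if (isdigit((unsigned char)line[2]) ||
        (clang_mode && !strncmp(line + 1, "LBB", 3)))
      return LINE_BRANCH_LABEL;
    return LINE_OTHER; /* e.g. .LC0 or .Ltmp0 */
  }

  return LINE_FUNC_LABEL;
}
```

Note how .LBB0_0: is a branch label only when clang_mode is set, which is why it appears in both lists of the walkthrough.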

In addition, every time the trampoline (trampoline_fmt) is inserted, R(MAP_SIZE) is passed in, which gives each instrumentation point a random ID
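The two uses of R() mentioned so far, the density check and the random ID, can be sketched as below. The definition of R() here is an assumption built on the standard rand(); afl-as may rely on a different libc generator. The helper names should_instrument and random_location_id are hypothetical.

```c
#include <stdlib.h>

#define MAP_SIZE (1 << 16)       /* 64K, as stated in the text */
#define R(x) (rand() % (x))      /* assumed definition of R() */

/* Hypothetical helper: decide whether this location gets instrumented.
   inst_ratio is a percentage between 0 and 100. */
int should_instrument(unsigned inst_ratio) {
  return (unsigned)R(100) < inst_ratio;
}

/* Hypothetical helper: pick a random ID for a new instrumentation point. */
unsigned random_location_id(void) {
  return (unsigned)R(MAP_SIZE);
}
```

With inst_ratio at 100 every candidate is instrumented, and with 0 none is; the ID is chosen at assembly time, so it stays fixed for the lifetime of the compiled binary.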

trampoline_fmt

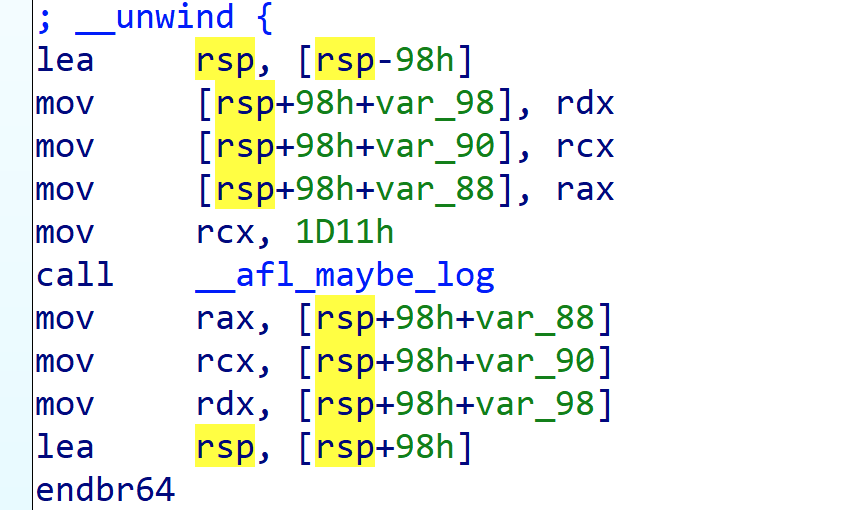

以 trampoline_fmt_64 为例来查看 trampoline_fmt :

1 | static const u8* trampoline_fmt_64 = |

其中这里 mov rcx, xxx 的 xxx 就是 R(MAP_SIZE),以此来标示和区分每个分支点

对应 IDA 中为:
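To make the role of the placeholder concrete, here is a minimal sketch of how a printf-style format string can be filled in with a location ID. The two-line demo_trampoline_fmt is an abbreviated stand-in written for this example, not the full trampoline_fmt_64, and render_trampoline is a hypothetical helper.

```c
#include <stdio.h>

/* Abbreviated stand-in for the real multi-line format string. */
static const char *demo_trampoline_fmt =
  "movq $0x%08x, %%rcx\n"
  "call __afl_maybe_log\n";

/* Hypothetical helper: render the trampoline text for one location ID. */
int render_trampoline(char *buf, size_t len, unsigned loc_id) {
  return snprintf(buf, len, demo_trampoline_fmt, loc_id);
}
```

Because the ID is baked into the emitted assembly as an immediate, each instrumentation point carries its own constant in rcx when it calls into the shared logging routine.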

main_payload

以 main_payload_64 为例来查看 main_payload :

1 | /* The OpenBSD hack is due to lahf and sahf not being recognized by some |

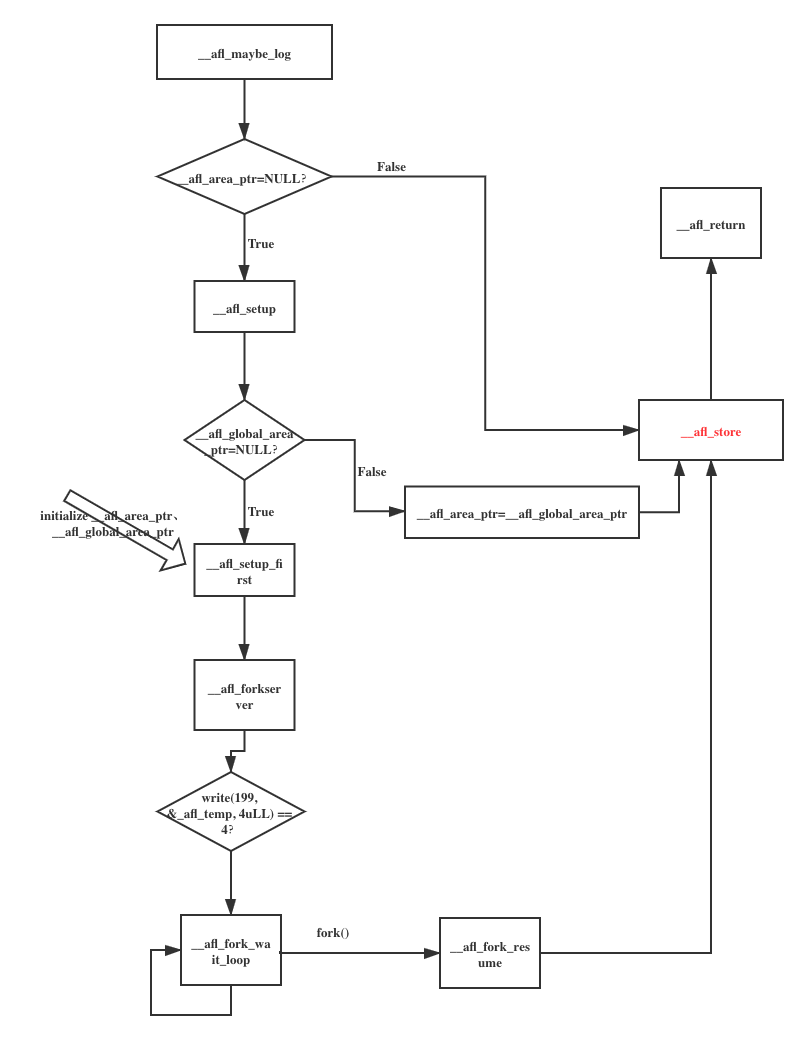

比较长,大致流程如下图:

分块进行说明

Global variables in the .bss section

.AFL_VARS:

__afl_maybe_log

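__afl_maybe_log:

- Use lahf to load the status flags into AH, then use seto to store the overflow flag in AL, since lahf does not capture OF

- Check whether __afl_area_ptr has been initialized

  - If it has not, jump to __afl_setup

  - If it has, continue into __afl_store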

__afl_setup

__afl_setup:

- If __afl_setup_failure is non-zero, jump to __afl_return and return

- If __afl_global_area_ptr is non-null, copy it into __afl_area_ptr and jump to __afl_store

- Otherwise continue into __afl_setup_first

__afl_setup_first

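__afl_setup_first:

- Save the registers that the upcoming library calls may clobber onto the stack, including the xmm registers

- Align rsp

- Read the environment variable __AFL_SHM_ID; if it is not set, jump to __afl_setup_abort

- Call shmat(id, 0, 0), where id is the numeric value of __AFL_SHM_ID. This maps the shared memory segment into the address space of the calling process; on failure, jump to __afl_setup_abort

- Store the returned shared memory address in __afl_area_ptr and __afl_global_area_ptr

- Then continue into __afl_forkserver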
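The shared memory lookup in the steps above corresponds roughly to the following C. This is a sketch rather than a translation of the assembly: attach_shared_map is a hypothetical name, atoi is assumed for the string-to-integer conversion, and the register saving and stack alignment are omitted.

```c
#include <stdlib.h>
#include <sys/shm.h>

#define SHM_ENV_VAR "__AFL_SHM_ID"

/* Hypothetical C rendering of the setup step: returns the mapped
   region, or NULL where the assembly would go to __afl_setup_abort. */
unsigned char *attach_shared_map(void) {
  const char *id_str = getenv(SHM_ENV_VAR);
  if (!id_str) return NULL;              /* variable not set */

  void *area = shmat(atoi(id_str), NULL, 0);
  if (area == (void *)-1) return NULL;   /* shmat reports failure as -1 */

  return (unsigned char *)area;
}
```

When the instrumented binary is run outside of afl-fuzz, the variable is missing, the function yields NULL, and the program simply runs without recording coverage.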

__afl_forkserver

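__afl_forkserver:

- Write the four bytes of __afl_temp to STRINGIFY((FORKSRV_FD + 1)), i.e. file descriptor 199 (the status pipe), to signal that the fork server has started successfully

- If the write fails, jump to __afl_fork_resume; otherwise continue into __afl_fork_wait_loop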

__afl_fork_wait_loop

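__afl_fork_wait_loop:

- Read four bytes from STRINGIFY(FORKSRV_FD), i.e. file descriptor 198; if the read fails, jump to __afl_die

- fork a child process; on failure jump to __afl_die, and in the child jump to __afl_fork_resume

- Store the child's pid in __afl_fork_pid and write those four bytes to STRINGIFY((FORKSRV_FD + 1)), i.e. file descriptor 199

- waitpid for the child to finish, then write the four bytes of __afl_temp to STRINGIFY((FORKSRV_FD + 1)), i.e. file descriptor 199

- Start the next round of __afl_fork_wait_loop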
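One round of this loop can be modeled in C as shown below. The sketch departs from the real payload in ways that should be kept in mind: it takes the pipe descriptors as parameters instead of using 198 and 199, the child runs a callback and exits instead of resuming the instrumented program, and failures are returned to the caller rather than ending in __afl_die. The names forkserver_round and demo_target are hypothetical.

```c
#include <stdint.h>
#include <sys/types.h>
#include <sys/wait.h>
#include <unistd.h>

/* Hypothetical stand-in for "the rest of the target program". */
int demo_target(void) { return 7; }

/* Hypothetical helper: one round of the wait loop on arbitrary fds.
   Returns 0 on success, -1 where the assembly would go to __afl_die. */
int forkserver_round(int ctl_fd, int st_fd, int (*run_target)(void)) {
  int32_t tmp;

  /* Wait for the fuzzer's 4-byte request on the control descriptor. */
  if (read(ctl_fd, &tmp, 4) != 4) return -1;

  pid_t child = fork();
  if (child < 0) return -1;
  if (child == 0) _exit(run_target());   /* child: run the test case */

  /* Parent: report the child's pid, then its wait status. */
  int32_t pid = (int32_t)child;
  if (write(st_fd, &pid, 4) != 4) return -1;

  int status;
  if (waitpid(child, &status, 0) <= 0) return -1;
  if (write(st_fd, &status, 4) != 4) return -1;

  return 0;
}
```

The fuzzer side therefore sees exactly two 4-byte messages per test case: first the pid, then the raw wait status, which it can decode with the usual WIFEXITED and WIFSIGNALED macros.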

__afl_fork_resume

__afl_fork_resume:

- Close file descriptors 198 and 199 in the child

- Restore the child's register state

- Jump to __afl_store

__afl_store

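__afl_store:

- XOR the value of the previous instrumentation point, __afl_prev_loc, with the value of the current one (the R(MAP_SIZE) chosen for it), increment the corresponding slot in shared memory, then set __afl_prev_loc to the current value >> 1

  - The right shift by one is needed because otherwise jumps such as A→A and B→B could not be told apart (both XOR to 0), and neither could A→B and B→A (XOR is symmetric)

  - MAP_SIZE = 64K here, so collisions are possible, but their probability is acceptable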
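The edge counting described above fits in a few lines of C. This is a sketch of the logic, with hypothetical function names afl_store and edge_slot; the real code is assembly operating on rcx and the shared memory region.

```c
#include <stdint.h>

#define MAP_SIZE (1 << 16)

/* Hypothetical C rendering of the store step for one location ID. */
void afl_store(uint8_t *map, uint32_t *prev_loc, uint32_t cur_loc) {
  map[(cur_loc ^ *prev_loc) % MAP_SIZE]++;  /* count the edge prev -> cur */
  *prev_loc = cur_loc >> 1;                 /* shift so direction matters */
}

/* Hypothetical helper: the slot hit by the edge from -> to. */
uint32_t edge_slot(uint32_t from, uint32_t to) {
  return (to ^ (from >> 1)) % MAP_SIZE;
}
```

With the shift in place, edge_slot(A, B) and edge_slot(B, A) generally differ, and a self-loop A→A no longer collapses onto slot 0.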

__afl_setup_abort

__afl_setup_abort:

- Increment __afl_setup_failure and restore the register values

- Jump to __afl_return

__afl_return

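__afl_return:

- Add 127 to al and execute sahf to restore the flags saved on entry, then return. al holds the 0 or 1 written by seto, so the addition overflows, and sets OF again, exactly when OF was set before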
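Why adding 127 brings the overflow flag back can be checked with a tiny C model of the signed 8-bit addition. The function name add127_sets_of is hypothetical, and the model only mirrors the arithmetic, not the actual flag register.

```c
#include <stdint.h>

/* Hypothetical model: would "add 127 to al" set the overflow flag?
   al is the byte written by seto on entry (0 or 1). */
int add127_sets_of(uint8_t al) {
  int sum = (int8_t)al + 127;   /* signed 8-bit addition, done in int */
  return sum > INT8_MAX;        /* result does not fit in int8_t: OF = 1 */
}
```

For al = 0 the sum is 127 and fits, so OF stays clear; for al = 1 the sum is 128, which exceeds the signed 8-bit range, so OF is set again.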

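Summary

Overall, the process can be summarized as follows:

- Initialize some state, including the shared memory, saving the registers along the way

  - Only the first call to __afl_maybe_log goes through these steps

- After successful initialization, write four bytes to file descriptor 199 to tell afl-fuzz that the fork server has started

- Enter the __afl_fork_wait_loop loop

  - Read four bytes from file descriptor 198 (this is afl-fuzz telling the fork server to fork a new process for the next test)

  - fork a child process to run the test case; the fork server writes the child's pid to the status pipe to inform afl-fuzz

  - The fork server waits for the child to finish, stores the result in __afl_temp, and writes it to the status pipe to inform afl-fuzz

  - Start the next iteration

- If the shared memory has already been set up, go straight to the __afl_store logic

  - XOR the value of the previous instrumentation point, __afl_prev_loc, with the value of the current one (R(MAP_SIZE)), increment the corresponding slot in shared memory, then set __afl_prev_loc to R(MAP_SIZE) >> 1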

All articles in this blog are licensed under CC BY-NC-SA 4.0 unless otherwise stated.